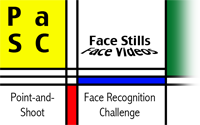

Overview of Competition

Person recognition in video remains a relatively unexplored problem with many open challenges and questions. This is particularly true for videos of people carrying out activities, as opposed to people speaking to a camera. This evaluation will include videos from the Point-and-Shoot Face Recognition Challenge (PaSC) and the Video Database of Moving Faces and People (VDMFP) collected at the University of Texas at Dallas.

The PaSC data set was collected at the University of Notre Dame and includes multiple videos of 265 people carrying out relatively ordinary actions such as picking up an object or throwing a ball. This data set was gathered to spur the development of algorithms that find the relevant information and recognize the people in videos where people are engaged in some activity not directly associated with the camera. There is a strong presumption that much of the current work on this task will involve face recognition, but it is not a requirement.

The PaSC videos were used in the IJCB 2014 Handheld Video Face and Person Recognition Competition and the FG 2015 Video Person Recognition Evaluation. The VDMFP videos were used in the Video Portion of the Multiple Biometrics Grand Challenge (MBGC). Importantly, human performance benchmarks exist for both the PaSC video challenge and the VDMFP.

There are two innovations over previous PaSC-based competitions. The first measures algorithm performance on two video datasets collected at different institutions. By incorporating two qualitatively different datasets, the competition will measure the ability of algorithms to generalize across datasets. The second will compare human and algorithm performance on videos from both datasets.

Update on UTD problem: The UTD problem consists of one similarity matrix and a corresponding mask matrix. The source matrices can be found on the support page. To compute the similarity matrix, compare all the videos in the target set against all the videos in the query set. To compute performance, use the UTD mask matrix.
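As a rough illustration of the procedure described for the UTD problem, the sketch below scores every query video against every target video and then uses a mask to decide which scores count toward performance. Several details are assumptions made for the example and are not taken from the evaluation protocol: each video is represented by a feature vector, cosine similarity stands in for the comparison function, rows index query videos and columns index target videos, and the mask codes `IGNORE`, `NONMATCH`, and `MATCH` are hypothetical placeholders. The actual matrix layout and mask encoding are defined by the files on the support page.

```python
from math import sqrt

# Hypothetical mask codes; the real encoding is defined by the UTD mask file.
IGNORE, NONMATCH, MATCH = 0, 1, 2


def cosine(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def similarity_matrix(query, target, score=cosine):
    """Score every query video against every target video.

    Assumed layout: one row per query video, one column per target video.
    """
    return [[score(q, t) for t in target] for q in query]


def split_scores(sim, mask):
    """Collect match and non-match scores, skipping cells the mask ignores."""
    match, nonmatch = [], []
    for sim_row, mask_row in zip(sim, mask):
        for s, m in zip(sim_row, mask_row):
            if m == MATCH:
                match.append(s)
            elif m == NONMATCH:
                nonmatch.append(s)
    return match, nonmatch


def verification_rate(match, nonmatch, far):
    """Fraction of match scores accepted at a given false accept rate.

    The threshold is chosen so that at most `far` of the non-match scores
    lie strictly above it. Both score lists must be non-empty.
    """
    ranked = sorted(nonmatch, reverse=True)
    allowed = int(far * len(ranked))  # non-matches permitted above threshold
    threshold = ranked[min(allowed, len(ranked) - 1)]
    return sum(s > threshold for s in match) / len(match)


if __name__ == "__main__":
    # Toy feature vectors standing in for per-video descriptors.
    query = [[1.0, 0.0], [0.0, 1.0]]
    target = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    mask = [[MATCH, NONMATCH, IGNORE],
            [NONMATCH, MATCH, IGNORE]]

    sim = similarity_matrix(query, target)
    match, nonmatch = split_scores(sim, mask)
    print(verification_rate(match, nonmatch, far=0.0))
```

The point of keeping the mask separate from the similarity matrix is that the algorithm only has to produce scores for every query and target pair; which pairs are scored as matches, scored as non-matches, or left out is decided entirely by the mask.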

Call for Participation [PDF]

Important Dates:

Evaluation announcement: February 1, 2016

First round similarity matrices delivered and summary of approach given to Notre Dame: April 15, 2016

Final similarity matrices delivered to Notre Dame and option to supply modified approach description: May 4, 2016

Updated report delivered to BTAS 2016: May 10, 2016

Final notification of BTAS 2016 decision on report: June 10, 2016

Organizers:

Patrick J. Flynn (Notre Dame)

Walter J. Scheirer (Notre Dame)

P. Jonathon Phillips (NIST)